VCF 9.1 does things a little differently, and VMware’s documentation on setting up an offline depot has lots of stuff we home-labbers don’t really use, need, or want:

https://techdocs.broadcom.com/us/en/vmware-cis/vcf/vcf-9-0-and-later/9-1/lifecycle-management/binary-management-for-vmware-cloud-foundation/set-up-an-offline-depot-web-server-for-vmware-cloud-foundation.html

But setting up the offline depot isn’t all that bad…

Read on to see how I did it.

Luke

Upgrading the Homelab: Moving from Intel i5 Nodes to Ryzen 9 7900

This follows up on my home lab blog post from nearly three years ago (man, how time flies!). The difference this time? I’ve actually ordered the BOM below!

I’ve been running my homelab on four identical machines for several years, and while they handled VMware vSphere 8.0 U2 well enough, the hardware increasingly became the limiting factor. Between vSAN ESA’s RAM overhead and the modest core count of the i5-7600 (four cores, four threads), it was time for a refresh.

This post covers the hardware transition: what I was running, the new BOM, why the upgrade was necessary, realistic power consumption estimates, and notes about PCIe lane realities. Software coverage will come in a follow-up article.

Current Cluster (The Old Setup)

Each of the four nodes included:

- Motherboard: MSI Z270 SLI

- CPU: Intel i5-7600

- RAM: 64 GB (4 × Timetec 16 GB)

- Boot Disk: SanDisk 240 GB SATA

- Data Drives: Intel Optane 900P 280 GB PCIe Add-In Cards (AICs)

- NIC: Vogzone M.2 → 10GbE RJ45

Across the cluster I ran a total of ten Intel Optane 900P AICs (two nodes with three, two nodes with two), which paired exceptionally well with vSAN. Unfortunately, vSAN ESA consumed nearly half of each node’s 64 GB of RAM, leaving little headroom for workloads. vSAN OSA used far less RAM, but it also failed to realize the full performance of the Optanes, so ESA remained the choice despite the memory penalty.

ESXi boot screen stuck at “Shutting down firmware service… Using ‘simple offset’ UEFI RTS mapping policy Relocating modules and starting up the kernel…”

If your ESXi host isn’t showing the expected DCUI, and you’re instead seeing something like the following:

Shutting down firmware service...

Using 'simple offset' UEFI RTS mapping policy

Relocating modules and starting up the kernel...

Don’t be alarmed; this is actually fairly normal.

How to deploy helm charts with VMware Aria Automation 8.18

TL;DR: I built a tiny Docker image containing a Helm client and a small run script, then invoked that image from VMware Aria Automation 8.18 blueprints to deploy Helm charts (tested with Aria Automation 8.18.2, VCF 5.2.1, and TKG). The code and Dockerfile are on GitHub; links below:

RTFA…

I’ve been working with Intel on various AI projects, including benchmark testing and distributed training using the Intel AMX (Advanced Matrix Extensions) accelerator in 4th Gen and later Xeon CPUs, which gave me the opportunity to dig deep into automating workflows. As we moved on to deploying AI chatbots, I wanted to automate Intel’s Open Platform for Enterprise AI (OPEA), but it’s deployed using Helm charts.

While VMware Aria Automation is itself deployed with Helm internally, it doesn’t support deploying Helm charts as a client. A quick search will tell you to use ABX (Action Based Extensibility), which is now simply called Actions, but that’s not exactly straightforward either. The general consensus was to use a separate Helm client to run the helm commands. I tried using pip to run them in Aria Automation’s Actions runtime environment, but that failed… miserably (especially for me; I felt like I was the failure). So I set out to find a better way…

Build my own custom Docker container

Script to generate JSON file from Excel Workbook for VMware Cloud Foundation

As you know, I prefer to use the command line or the API to do things. It’s faster, repeatable, and consistent.

The problem I have with VMware Cloud Foundation is that neither the API nor the PowerVCF module will let you use the vcf-ems-deployment-parameter.xlsx parameter workbook; you must supply a JSON file instead.

I have another script I plan on releasing that fully automates a VCF deployment from start to finish: it monitors the ESXi deployment in the hardware OEM’s tooling, deploys CloudBuilder, and then leverages this script to generate the JSON file. But I digress; that’s for another post.

To use this script, you must have a CloudBuilder appliance running and know its admin and root passwords. This is a first edition: you must edit the variables to suit your environment, it doesn’t do any validation, and it will continue on error.
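generate-json.ps1 relies on the CloudBuilder appliance to produce the JSON, so the sketch below is not how that script works. It only illustrates one PowerShell gotcha worth knowing if you ever assemble or tweak such a JSON file yourself: ConvertTo-Json defaults to a depth of 2, so deeply nested objects can be flattened into type-name strings unless you raise -Depth. The spec fields below are hypothetical placeholders, not the full VCF schema.

```powershell
# Hypothetical fragment of a deployment spec; field names and values are
# illustrative placeholders only, not the complete VCF bring-up schema.
$spec = [ordered]@{
    sddcId    = 'sddc-lab01'
    dnsSpec   = [ordered]@{
        subdomain  = 'lab.local'
        nameserver = '192.168.1.10'
    }
    hostSpecs = @(
        [ordered]@{
            hostname         = 'esx01'
            ipAddressPrivate = [ordered]@{ ipAddress = '192.168.1.11' }
        }
    )
}

# ConvertTo-Json defaults to -Depth 2; raise it so the nested objects
# (hostSpecs -> host -> ipAddressPrivate) are serialized instead of truncated.
$json = $spec | ConvertTo-Json -Depth 10

# Write the result to a temp file (the path is an arbitrary example).
$path = Join-Path ([System.IO.Path]::GetTempPath()) 'vcf-spec-sketch.json'
Set-Content -Path $path -Value $json -Encoding utf8
$json
```

Reading the file back with ConvertFrom-Json is a quick way to confirm nothing was lost before handing it to the API.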

I’ve added the file to my GitHub VCF Preparation repo as generate-json.ps1 here: https://github.com/ThepHuck/VCF_Preparation/blob/main/generate-json.ps1

Thanks & happy scripting!

Script to add an NVMe Controller and Disks to a VMware VM

I was trying to find a way to add an NVMe controller and disks to a VM, but there don’t seem to be PowerCLI cmdlets for this. If I missed them, please tell me!

I did some googling but didn’t find much. I checked the API and found endpoints for vCenter, but not for ESXi.

I’m targeting ESXi directly because I want to build a nested vSAN ESA environment, which is why I was trying to add an NVMe controller & disks.

A friend suggested using vCenter’s Code Capture feature in the Developer Center, and that was enough to point me in the right direction.

With that, I created a script called New-NVMeDisk.ps1 and published it on GitHub. Feel free to use it; just maybe link to this blog or my GitHub if you use it in a script.

GitHub link: https://github.com/ThepHuck/ThepHuck/tree/master/New-NVMeDisk

Host prep scripts for deploying & redeploying VCF

Hello! Long time, no scripting! I’ve been blowing through VCF, deploying and redeploying, and I built some scripts to help me with this. Sharing is caring; read on to see what I’ve done…

Building a home lab – Part 1 – Starting with CPU, Motherboard, PCIe Lanes and Bifurcation

Before we get started, a little info about this post

At a high level, I need to install five (5) PCIe NVMe SSDs into a homelab server. In this post I cover how the CPU and motherboard each play a role in how and where these PCIe cards can and should be connected. I learned that simply having slots on the motherboard doesn’t mean they’re all capable of the same things. My research was eye-opening and really helped me understand the underlying architecture of the CPU, the chipset, and manufacturer-specific motherboard connectivity. It’s a lot to digest at first, but I hope this provides some insight for others to learn from. Before I forget: the info below applies to server motherboards, too, and plays a key role in dual-socket boards when only a single CPU is installed.
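The lane math behind this is simple enough to sketch. The PowerShell below assumes each NVMe SSD wants a full PCIe x4 link and that the CPU exposes 16 general-purpose lanes, which is typical of mainstream desktop parts; both numbers are assumptions to adjust for your own board, and Get-PcieLaneBudget is a hypothetical helper, not a real cmdlet.

```powershell
# Hypothetical lane-budget helper. Assumptions: every NVMe SSD uses an x4 link,
# and the CPU provides 16 general-purpose PCIe lanes (mainstream desktop).
function Get-PcieLaneBudget {
    param(
        [int]$DriveCount    = 5,   # five NVMe SSDs, as in this post
        [int]$LanesPerDrive = 4,   # typical NVMe link width
        [int]$CpuLanes      = 16   # adjust for your CPU
    )

    $required = $DriveCount * $LanesPerDrive

    [pscustomobject]@{
        RequiredLanes          = $required
        CpuLanes               = $CpuLanes
        # Lanes that cannot come from the CPU and must come from elsewhere
        Shortfall              = [Math]::Max(0, $required - $CpuLanes)
        # An x16 slot bifurcated x4/x4/x4/x4 can host this many x4 drives
        DrivesPerBifurcatedX16 = [int][Math]::Floor(16 / $LanesPerDrive)
    }
}

Get-PcieLaneBudget
```

With five drives that works out to 20 lanes against 16, so even a fully bifurcated x16 slot carries only four of them; on a typical desktop platform the remainder ends up on chipset lanes, which share the chipset’s uplink to the CPU with everything else attached to it.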

Sometimes the hardest part of any daunting task is simply starting. I got some help from Intel here, though.

Played with PowerShell Parameter Sets and Dynamic Parameters

I’m writing a script to deploy Azure VMware Solution (AVS) and ran into a situation many of us likely have: Some parameters depend on other parameters.

I started with Parameter Sets, with several parameters participating in multiple sets, but that didn’t work the way I thought it would (or should).

Here’s what didn’t work:

```powershell
[CmdletBinding(DefaultParametersetName="cli")]
param(
    [Parameter(ParameterSetName="cli")][Parameter(ParameterSetName="createVNET")][Parameter(Mandatory,ParameterSetName="VMInternet")][switch]$createVNET,
    [Parameter(ParameterSetName="cli")][Parameter(Mandatory,ParameterSetName="createVNET")][Parameter(Mandatory,ParameterSetName="VMInternet")][string]$vNetIPSubnet,
    [Parameter(ParameterSetName="cli")][Parameter(Mandatory,ParameterSetName="createVNET")][Parameter(Mandatory,ParameterSetName="VMInternet")][string]$vNetGatewaySubnet,
    [Parameter(ParameterSetName="cli")][Parameter(Mandatory,ParameterSetName="createVNET")][Parameter(Mandatory,ParameterSetName="VMInternet")][string]$vNetBastionSubnet,
    [Parameter(ParameterSetName="cli")][Parameter(Mandatory,ParameterSetName="createVNET")][Parameter(Mandatory,ParameterSetName="VMInternet")][string]$vNetManagementSubnet,
    [Parameter(ParameterSetName="cli")][Parameter(ParameterSetName="VMInternet")][switch]$EnableVMInternet,
    [Parameter(ParameterSetName="cli")][Parameter(Mandatory,ParameterSetName="VMInternet")][string]$vNetFirewallSubnet,
    [Parameter(ParameterSetName="cli")][Parameter(Mandatory,ParameterSetName="VMInternet")][string]$vNetHubSubnet
)
```

My intention was to make additional parameters mandatory based on additional switches. For instance, if you add “-createVNET”, the script needs four additional parameters. Also, if you use “-EnableVMInternet” without “-createVNET”, the script needs to recognize that “-createVNET” wasn’t supplied and make its parameters mandatory as well. Spoiler: that didn’t work.
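One way to express “these parameters become mandatory only when a switch is present” is a DynamicParam block. The function below is my own minimal sketch, not the final AVS script: Deploy-AvsNetworkSketch is a hypothetical name, and only the switch and subnet parameter names are borrowed from the example above. It checks which switches are already bound, then registers the matching subnet parameters as mandatory strings.

```powershell
function Deploy-AvsNetworkSketch {
    [CmdletBinding()]
    param(
        [switch]$createVNET,
        [switch]$EnableVMInternet
    )

    DynamicParam {
        $dict = [System.Management.Automation.RuntimeDefinedParameterDictionary]::new()

        # Static switches bound so far are visible in $PSBoundParameters here
        $wantVNet     = $PSBoundParameters.ContainsKey('createVNET') -and $PSBoundParameters['createVNET'].IsPresent
        $wantInternet = $PSBoundParameters.ContainsKey('EnableVMInternet') -and $PSBoundParameters['EnableVMInternet'].IsPresent

        $names = @()
        # -EnableVMInternet also needs the vNet subnets, with or without -createVNET
        if ($wantVNet -or $wantInternet) {
            $names += 'vNetIPSubnet', 'vNetGatewaySubnet', 'vNetBastionSubnet', 'vNetManagementSubnet'
        }
        if ($wantInternet) {
            $names += 'vNetFirewallSubnet', 'vNetHubSubnet'
        }

        foreach ($name in $names) {
            $attr = [System.Management.Automation.ParameterAttribute]::new()
            $attr.Mandatory = $true
            $attrs = [System.Collections.ObjectModel.Collection[System.Attribute]]::new()
            $attrs.Add($attr)
            $dict.Add($name, [System.Management.Automation.RuntimeDefinedParameter]::new($name, [string], $attrs))
        }
        return $dict
    }

    end {
        # Dynamic parameters are not created as variables; read them from $PSBoundParameters
        [pscustomobject]@{
            CreateVNET       = $createVNET.IsPresent
            EnableVMInternet = $EnableVMInternet.IsPresent
            Subnets          = @($PSBoundParameters.Keys | Where-Object { $_ -like 'vNet*Subnet' } | Sort-Object)
        }
    }
}
```

Calling it with only -EnableVMInternet in an interactive session should prompt for all six subnets, while passing a subnet parameter without either switch fails at binding time because that parameter doesn’t exist yet.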

ESXi host stuck entering Maintenance Mode

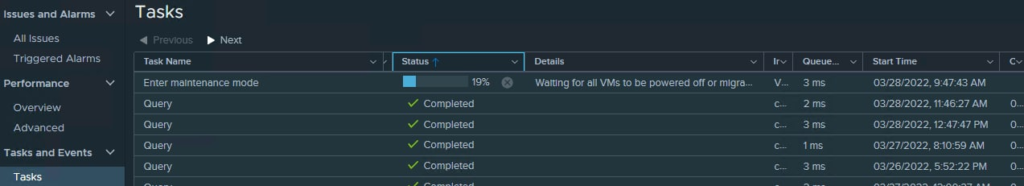

Maintenance Mode task hangs

I told one of my nodes to enter maintenance mode, and it sat overnight like this:

That screenshot was taken almost exactly 26 hours later. There were no running VMs on the host, nothing on the local datastore, no resyncing or rebuilding objects in vSAN, and nearly zero I/O on the network adapters.

I tried canceling the task; it would not cancel.

I rebooted the host; it came back into the cluster with the task still running.

I rebooted my vCenter, and that finally killed the task.