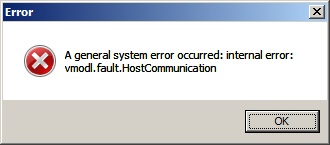

I just built a new environment and was greeted by this error. This fix will likely work on other Dell servers, and the settings may apply to other vendors.

At a high level, you need to set TPM2 Algorithm Selection to SHA256 in the BIOS. You MIGHT also have to turn on Intel TXT and then enable Secure Boot. This SHOULD NOT affect the ESXi installation, but there is a chance it will: enabling Secure Boot on a host with modified or unsigned files risks leaving it unable to boot the current ESXi installation.
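If you want to see what the host itself reports before and after the BIOS changes, here is a minimal Python sketch that wraps `esxcli hardware trustedboot get` and turns its `Key: value` lines into a dict. It is not part of the fix. The helper names (`parse_key_values`, `trusted_boot_status`) are made up for this example, and the sample field names in the comments are an assumption about what the output looks like on your build.

```python
import subprocess


def parse_key_values(text):
    """Turn 'Key: value' lines into a dict, ignoring lines without a colon."""
    result = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            result[key.strip()] = value.strip()
    return result


def trusted_boot_status():
    """Run esxcli on the ESXi host and return its output as a dict.

    Only works in an ESXi shell (or over SSH to the host).
    """
    completed = subprocess.run(
        ["esxcli", "hardware", "trustedboot", "get"],
        stdout=subprocess.PIPE,
        universal_newlines=True,
        check=True,
    )
    return parse_key_values(completed.stdout)


if __name__ == "__main__":
    # Assumed shape of the output, for illustration only:
    #    Drtm Enabled: true
    #    Tpm Present: true
    for key, value in trusted_boot_status().items():
        print(key, "=", value)
```

Run it once before you touch the BIOS and once after, and compare the two results.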

So, here we go: