If your ESXi host isn’t showing you the expected DCUI, and instead you’re seeing something like the following:

Shutting down firmware service...

Using 'simple offset' UEFI RTS mapping policy

Relocating modules and starting up the kernel...


How to deploy helm charts with VMware Aria Automation 8.18

TL;DR — I built a tiny Docker image that contains a Helm client and a small run script, then invoked that image from VMware Aria Automation 8.18 blueprints to deploy Helm charts (tested with Aria 8.18.2, VCF 5.2.1 and TKG). Code and Dockerfile on GitHub, links below:

RTFA…

I’ve been working with Intel on various AI projects, including benchmark testing and distributed training using Intel’s AMX Accelerator in their 4th Gen and later XEON CPUs, which gave me the opportunity to really dig deep into automating workflows. As we moved forward into deploying AI chatbots, Intel has their Open Platform Enterprise AI (OPEA) that I wanted to automate, but it’s deployed using Helm charts.

While VMware Aria Automation is deployed with Helm internally, it doesn’t actually support deploying Helm charts as a client. A quick search will tell you to use “ABX”, which is now simply called “Actions”, but that’s not exactly straightforward either. The general consensus was to have a separate Helm client that could run the helm commands. I tried to use pip to run them in Aria Automation’s Actions runtime environment, but that failed … miserably (for me, especially; I felt like I was the failure). So I set out to find a better way…
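One way to picture the "separate Helm client" idea is a small run script baked into a container image that already has the helm binary on its PATH. The following is only a minimal sketch under stated assumptions, not the script from my GitHub repo: the function name `helm_deploy` and the `HELM_BIN` override are hypothetical, and the only Helm features it relies on are `helm upgrade --install` with the `--namespace`, `--create-namespace` and `--wait` flags.

```shell
#!/bin/sh
# Hypothetical run script for a Helm client container (illustration only).
# HELM_BIN is an assumed override so the command can be dry-run with `echo`.

helm_deploy() {
  release="$1"                 # Helm release name
  chart="$2"                   # chart reference: repo/chart or a local path
  namespace="${3:-default}"    # target namespace, defaults to "default"

  if [ -z "$release" ] || [ -z "$chart" ]; then
    echo "usage: helm_deploy RELEASE CHART [NAMESPACE]" >&2
    return 2
  fi

  # `upgrade --install` installs the release if it is missing and upgrades
  # it otherwise, so the same call works for first and repeat deployments.
  "${HELM_BIN:-helm}" upgrade --install "$release" "$chart" \
    --namespace "$namespace" --create-namespace --wait
}
```

Setting `HELM_BIN=echo` before calling `helm_deploy my-release ./my-chart my-namespace` prints the arguments instead of running Helm, which is a cheap way to check what the container would execute before pointing it at a real cluster.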

Build my own custom Docker container

Script to add an NVMe Controller and Disks to a VMware VM

I was trying to find a way to add an NVMe controller & disks to a VM, and there don’t seem to be any PowerCLI cmdlets for this. If I missed them, please tell me!

I did some googling, didn’t find much. I checked the API and found endpoints for the vCenter, but not ESXi.

I’m targeting ESXi directly because I want to build a nested vSAN ESA environment, which is why I was trying to add an NVMe controller & disks.

A friend suggested using the code capture function of vCenter in the developer center, and that was enough to point me in the right direction.

With that, I created a script called New-NVMeDisk.ps1 and published it on GitHub. Feel free to use it, just maybe link to this blog or my GitHub if you use it in a script.

GitHub link: https://github.com/ThepHuck/ThepHuck/tree/master/New-NVMeDisk

Host prep scripts for deploying & redeploying VCF

Hello! Long time, no scripting! I’ve been blowing through VCF, deploying and redeploying, and I built some scripts to help me with this. Sharing is caring, read on to see what I’ve done…

Building a home lab – Part 1 – Starting with CPU, Motherboard, PCIe Lanes and Bifurcation

Before we get started, a little info about this post

At a high level, I need to install five (5) PCIe NVMe SSDs into a homelab server. In this post I cover how CPU & motherboard all play a role in how & where these PCIe cards can and should be connected. I learned that simply having slots on the motherboard doesn’t mean they’re all capable of the same things. My research was eye-opening and really helped me understand the underlying architecture of the CPU, chipset, and manufacturer-specific motherboard connectivity. It’s a lot to digest at first, but I hope this provides some insight for others to learn from. Before I forget, the info below applies to server motherboards, too, and plays a key role in dual socket boards when only a single CPU is used.

Sometimes the hardest part of any daunting task is simply starting. I got some help from Intel here, though.

ESXi host stuck entering Maintenance Mode

Maintenance Mode task hangs

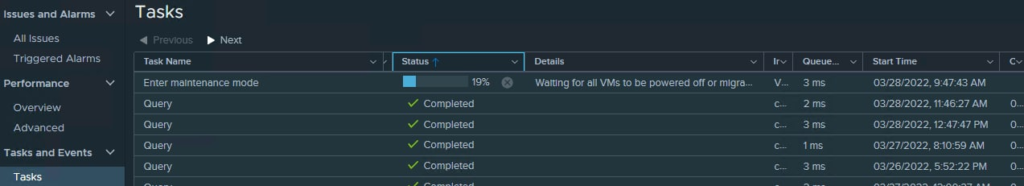

I told one of my nodes to enter maintenance mode and it sat for overnight like this:

That screenshot was taken almost exactly 26 hours later. There were no running VMs on the host, nothing on the local datastore, no resyncing or rebuilding objects in vSAN, and lastly nearly zero IO on the network adapters.

I tried canceling the task, it would not cancel.

I rebooted the host, it came back into the cluster with that task still running.

I rebooted my vCenter, and that finally killed the task.

How to bypass BAD PASSWORD: it is based on a dictionary word for vCenter VCSA root account

Today I am midway through setting up my lab and realized that VMware Cloud Foundation (VCF) is failing because I set the wrong password in my JSON file for the root account on my vCenter appliance.

No big deal, right? Just SSH in and change it. I tried, and got this:

    New password:
    BAD PASSWORD: it is based on a dictionary word
    passwd: Authentication token manipulation error
    passwd: password unchanged

The bypass was actually easy. Presumably you’re already SSH’d in as root, so you just need to edit /etc/pam.d/system-password

    # Begin /etc/pam.d/system-password
    # use sha512 hash for encryption, use shadow, and try to use any previously
    # defined authentication token (chosen password) set by any prior module
    password requisite pam_cracklib.so dcredit=-1 ucredit=-1 lcredit=-1 ocredit=-1 minlen=6 difok=4 enforce_for_root
    password required pam_pwhistory.so debug use_authtok enforce_for_root remember=5
    password required pam_unix.so sha512 use_authtok shadow try_first_pass
    # End /etc/pam.d/system-password

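Remove enforce_for_root from the pam_cracklib.so line (the first password line); leave the pam_pwhistory.so line alone. Save the file, no need to restart any services, and retry passwd. You will still see the warning, but this time the change goes through: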

    New password:
    BAD PASSWORD: it is based on a dictionary word
    Retype new password:
    passwd: password updated successfully

After that, I re-added enforce_for_root to the file and clicked RETRY back in VCF and all things are happy once again.
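If you would rather not hand-edit the file, the remove-then-restore steps above can be sketched as two small shell functions. This is a hedged sketch, not what I ran: the function names `relax_cracklib_for_root` and `restore_pam_file` are made up for illustration, and it assumes a sed that accepts `-i.bak` (GNU sed does). Only the pam_cracklib.so line is touched; the pam_pwhistory.so line keeps its enforce_for_root.

```shell
#!/bin/sh
# Hypothetical helpers (illustration only); run as root against
# /etc/pam.d/system-password on the appliance.

# Strip enforce_for_root from the pam_cracklib.so line only.
# The untouched original is kept next to the file as <file>.bak.
relax_cracklib_for_root() {
  pam_file="$1"
  sed -i.bak '/pam_cracklib\.so/ s/[[:space:]]*enforce_for_root//' "$pam_file"
}

# Put the original file back once the password has been changed.
restore_pam_file() {
  pam_file="$1"
  mv "$pam_file.bak" "$pam_file"
}
```

Usage would look like `relax_cracklib_for_root /etc/pam.d/system-password`, then `passwd`, then `restore_pam_file /etc/pam.d/system-password` so root goes back to being held to the same rules as everyone else.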

How to fix TPM 2.0 device detected but a connection cannot be established on Dell EMC VxRail nodes

I just built a new environment and was greeted by this error. This fix will likely work on other Dell servers, and the settings may apply to other vendors.

At a high level, you need to set TPM2 Algorithm Selection to SHA256 in the BIOS. You MIGHT have to turn on Intel TXT, and then enable Secure Boot. This SHOULD NOT impact the ESXi installation, but there is a chance it might: enabling Secure Boot on a machine with modified or unsigned files carries the risk of rendering it unbootable with the current ESXi installation.

So, here we go:

How to determine the active edge transport node in NSX-T 3.x

I’m blogging about this because I always seem to forget where to find the status of the Tier-0 Logical Router, basically which edge transport node is Active and which is Standby for that specific Tier-0 Gateway. It’s easy once I remember, but hitting the search engines doesn’t show anything useful, so I’ll try to keyword spam this to get more visibility for the next time I forget.

TL;DR: Switch to Manager mode. Click the Networking tab, Tier-0 Logical Routers, select the T0 you want. Look under High Availability Mode (screenshot below)

What is the problem?

vCenter Server Appliance fails with EXT4-fs journal errors

vCenter’s not responding properly

Scroll down to the bottom for the TL;DR version

I got a text message this evening from a colleague of mine (@FrankRax) stating our lab was down. I tried to hit the vCenter and the hosts & clusters view wouldn’t load in the web client, just left me with the spinning wheel:

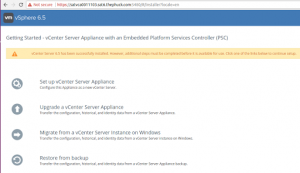

Okay, that’s fine, so I’ll check the VAMI, or Management UI of the VCSA, but then I got really scared when I saw this:

This isn’t a fresh install; it’s been a lab for a long time, and was even upgraded to 6.5u1 not that long ago. Now I knew for a fact something had gone wrong, so I launched the host client on each node in the cluster until I found the vCenter Server Appliance VM, launched the console, and was pretty much horrified at what I saw.

the following content may be disturbing to some audiences, viewer discretion is advised